The first thing I do every workday is grade the assignments of a team of robotic subordinates.

The 9 AM Inbox

The first thing I do every workday is batch-process whatever the AI left behind overnight.

Reports generated while I slept. PR drafts auto-opened on the codebase. Meeting notes the Agent assembled and tagged me in. Tickets stuck at some decision point waiting on my call. And a handful of escalations: "I think you should look at this one."

Coffee in hand, about 40 minutes. I go through them one by one. This PR is going the wrong way, reject. This report has the right thesis, just needs two more numbers. This ticket can run through automated repair. That customer complaint has a thread under it I want to pull myself. Then humans and AI go their separate ways for the rest of the day. I head to meetings. It goes off to do work.

I've been doing this for the better part of a year. The other day it hit me: the first hour of my workday is essentially managing a team of machine subordinates.

I sat there feeling slightly disoriented. When I manage humans, I have to read their emotions, give feedback, plan their careers. When I manage these machine subordinates, all I do is approve, reject, redirect, dispatch. The latter is an order of magnitude more efficient. It's also colder. Two ways of working are slowly diverging inside me.

Over the past six months I've been mapping out what I've seen, built, and broken along the way. This map isn't an academic framework. It's more like a sketch someone draws for themselves at 2 AM when they can't sleep, trying to make sense of the change happening around them. The questions it answers: where are we actually, what's still ahead, which traps are real, and which hype is fake.

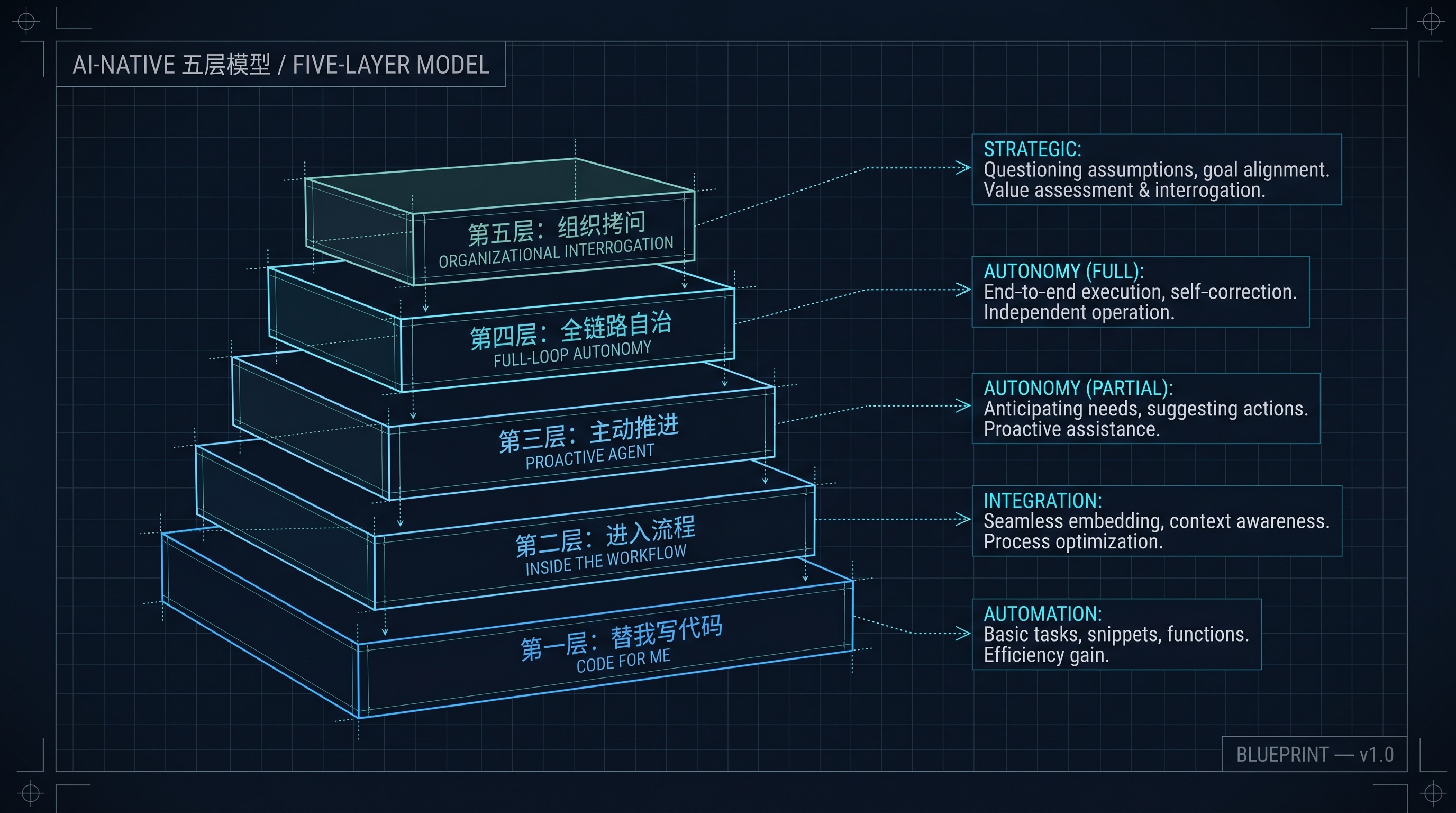

I call it the five-layer model of AI-Native.

The Five-Layer Model of AI-Native

Layer 1: Writing Code for You

The earliest use of AI was personal. People delegated their own work.

The clearest case is code. Once Cursor stabilized, my engineers basically stopped writing first drafts by hand. Requirements come in, they have a conversation with the AI, get a draft, then refine it. Same with documents. Weekly meeting notes drafted by AI, then polished. Review materials drafted by AI, then re-thought.

The fundamental difference between Layer 1 and "before AI"? The tool changed. The work didn't. People are doing the same things, just with a more efficient executor. Same kind of shift as moving from Vim to VSCode, or from SVN to Git.

Its boundary is also clear: AI only serves "me," the individual. It doesn't know what big project our department is working on, what request the team next door submitted last week, or why my boss is angry today. It's a very capable tool sitting on my desk.

The unexpected side effect: my engineers' coding time dropped from 6 hours a day to 2. Where did the other 4 go? Meetings. Alignment. Politics. Approval workflows. After Layer 1 lands, you'll hear engineers complain about meetings.

The complaint is valid and also wrong. What AI took away wasn't 6 hours of coding. It was 6 hours of "this logic is complex, I need to focus." With AI knocking out that logic in half an hour, the engineer has to actually face the meetings.

At Layer 1, the organization is the same organization it always was. AI just lights up on each engineer's desktop, individually.

Layer 2: Inside the Workflow (where we are now)

The mark of Layer 2 is that AI moves from serving individuals to serving processes.

Let me paint a concrete picture of what this looks like.

In our department's Lark groups, the busiest role used to be a handful of internal-support people. Starting at 9 AM, PMs, QAs, and account managers would throw questions in: "Has the launch plan changed?" "Where is that customer's compliance approval?" "Who got assigned that P3 ticket from yesterday?" About 80% of these questions were repeats, and most of the remaining 20% had standard answers. Only a small fraction actually required judgment. That repetition used to consume several headcount.

Now we have a Q&A bot in those groups. It reads the knowledge base, the ticket system, the release calendar. Most repeat questions get answered directly. It also takes meeting notes, syncs them into Lark Docs, and tags the relevant people. It pre-processes IT tickets. Simple things like resetting a test account or granting a development permission, it handles itself.

The fundamental difference between Layer 2 and Layer 1? AI moved from "personal desktop" into "team infrastructure." It no longer serves a single engineer. It serves the workflow. Its inputs and outputs are embedded in the team's daily collaboration.

But it has a clear ceiling: it's still purely reactive. You ask, it answers. Otherwise it sits there.

I figured out where that ceiling was during a production incident.

The root cause was buried in a Lark message an engineer had posted two weeks earlier. In a project group with 40-some people, he'd casually said: "I looked at this change. The reconciliation logic on Y business line might be affected." Some people sent a quick "got it." Some didn't see it. He himself forgot.

Two weeks later, Y business line's reconciliation actually broke. Customer complaints. Compliance escalation. The trail led back to me. The retrospective stung. The AI had "seen" that message all along. It had taken the meeting notes for that project. It had handled IT tickets in that group. It had even translated that message for the overseas team. But it had no concept of recognizing risk.

It processes messages without reading them. It assembles minutes without understanding which decisions in those minutes have no follow-up. It answers questions without asking "why hasn't anyone asked this before?"

That's where Layer 2 stops. It frees your hands. It doesn't free your eyes or your judgment.

This is mostly where we are. The wins at Layer 2 are easy to claim. Stand up a Q&A bot, drop in a meeting-notes tool, ROI shows up in two weeks, the slide deck for the boss writes itself. So plenty of teams stop here and call themselves "AI-Native."

They've installed a smarter customer service rep.

Between Layer 2 and Layer 3: The Real Wall

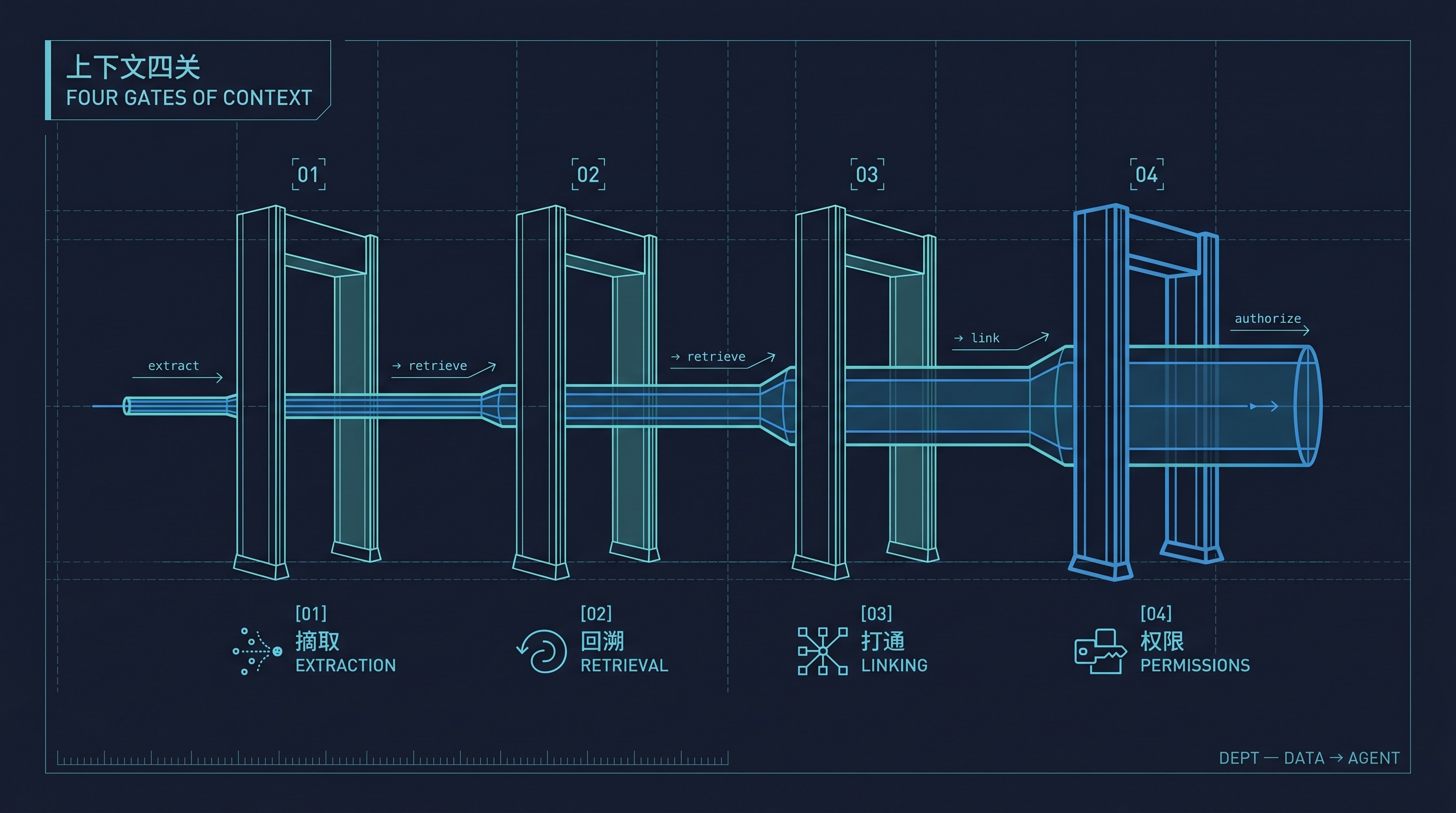

The gap between Layer 2 and Layer 3 hides one obstacle most people don't articulate: context.

"Context" decomposes into four progressively harder problems. Let me walk through them.

The Four Gates of Context: Extract, Trace, Connect, Authorize

Step One: Extraction

Before the AI can do anything, it has to know what's actually happening.

A customer issue comes in. What the AI needs to grasp is the entire story behind that single message: who is this customer, what have they complained about in the past three months, what's their contract tier, who last touched the feature they're complaining about, was the change properly reviewed, did monitoring fire any alerts at deploy time?

That information lives in CRM, the ticketing system, Git, Jira, monitoring, the release system, Lark groups, and Lark Docs. Each system has its own API, its own field naming, its own permission model. The "customer" entity alone is called customer_id in CRM, account in the ticket system, "customer number" in the contract system, and "entity code" in the compliance system. Four ID schemes. Nobody can give you the complete mapping.

Just getting the AI to extract "what just happened" requires a massive amount of translation work first.
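To make that translation work concrete, here is a minimal sketch of a cross-system identity map. The four field names echo the systems above. Everything else (the `CustomerIdentity` class, the `resolve` helper, the sample IDs) is hypothetical and invented for illustration, not a description of any real CRM or ticketing API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CustomerIdentity:
    """One customer as four systems name it. All values below are invented."""
    crm_customer_id: str                    # "customer_id" in CRM
    ticket_account: str                     # "account" in the ticket system
    contract_customer_number: str           # "customer number" in contracts
    compliance_entity_code: Optional[str]   # "entity code"; None if unmapped


# Hypothetical, hand-maintained mapping table.
IDENTITIES = [
    CustomerIdentity("C-1001", "acct-77", "CN-0042", "ENT-9"),
    CustomerIdentity("C-1002", "acct-78", "CN-0043", None),
]

# Which field each system uses as its key.
FIELD_BY_SYSTEM = {
    "crm": "crm_customer_id",
    "ticket": "ticket_account",
    "contract": "contract_customer_number",
    "compliance": "compliance_entity_code",
}


def resolve(system: str, value: str) -> Optional[CustomerIdentity]:
    """Return the unified identity for an ID from one system, or None."""
    field_name = FIELD_BY_SYSTEM[system]
    for identity in IDENTITIES:
        if getattr(identity, field_name) == value:
            return identity
    return None


match = resolve("ticket", "acct-78")
print(match.crm_customer_id if match else "no mapping")
```

The second sample record has no compliance code on purpose. In my experience the mapping is never complete, and the Agent has to be told explicitly when a link is missing rather than guess.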

Step Two: Retrieval

The present isn't enough. The AI has to dig backwards.

When an error log surfaces in production, the information that actually determines how to fix it might be buried in an architecture review meeting from three months ago. That edge case got discussed back then, a workaround was chosen, a TODO was left, nobody followed up.

Asking the AI to retrieve means it has to walk back from today's symptom to that meeting, that document, that decision, that specific person. The longer the retrieval chain, the more times the AI has to "jump." Each jump reloads context. Each jump is a non-trivial cost. Not just in tokens. Each hop also risks losing critical signal or wandering off course.
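One way to picture the hop cost is a walk over "refers back to" links with an explicit hop budget. This is a sketch under assumptions: the artifact names and the `LINKS` table are hypothetical, and a real retrieval chain would cross several systems rather than one dictionary.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical "refers back to" links between artifacts.
LINKS: Dict[str, List[str]] = {
    "error-log": ["release-note"],
    "release-note": ["pull-request"],
    "pull-request": ["design-doc"],
    "design-doc": ["architecture-review"],
    "architecture-review": [],
}


def trace_back(start: str, target: str, max_hops: int) -> Optional[List[str]]:
    """Breadth-first walk from a symptom toward its origin.

    Returns the path if the target is reachable within max_hops, else None.
    """
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        if len(path) - 1 >= max_hops:
            continue  # hop budget spent on this path
        for nxt in LINKS.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


print(trace_back("error-log", "architecture-review", max_hops=4))
print(trace_back("error-log", "architecture-review", max_hops=3))
```

With a budget of four hops the walk reaches the review meeting. With three it gives up one hop short, which is roughly how a TODO from three months ago stays buried.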

Step Three: Linking

You've extracted, you've retrieved. Now connect them.

Knowing "this customer raised this issue" isn't enough. The AI has to link this customer issue with last week's code change, with the monitoring curve from that day, with the compliance constraints involved, with the priority ranking in the product roadmap. It has to see the whole picture in one frame.

This is brutally hard because those five pieces of data live in five tables owned by five different departments: customer service, engineering, ops, compliance, product. Each department has its own data governance standard. Some departments' core data isn't even digitized; it lives in some director's head.

The actual work is data integration, schema unification, and cross-department permission coordination. Almost none of it has anything to do with training models.

The irony is that the difficulty isn't AI's fault. This is a "data platform" problem we should have solved a decade ago. We got away with deferring it. AI has finally made the cost of fragmented context impossible to defer: yesterday it just meant a few extra arguments in meetings; today it deadlocks your Agent.

Step Four: Permissions and Organizational Gravity

The last step is the hardest, because it isn't a technical problem at all.

The AI needs cross-system data access, and access requires permissions. An Agent that wants to read CRM data needs customer service to authorize it. To read code in Git, it needs engineering's authorization. To read compliance records, it goes through legal. Every additional layer of org structure is another door.

And these doors were designed for humans, not Agents. When you grant a permission to an engineer, their manager signs off, their job description backs it up, and if something goes wrong you know who to go to. When you grant a permission to an Agent, who signs? Who's accountable? Who answers when it breaks something?

We had a discussion in our department about granting an Agent read-only access to production. Three meetings. Compliance, security, ops, legal all in the room. We didn't do it. The technology was perfectly capable. Nobody was willing to put their name on the dotted line.
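The gap can be written down as a data model. In the sketch below, a grant only takes effect when a named human has signed it and a named human owns the outcome. The `AccessGrant` fields and the sample principals are hypothetical, made up for illustration; this is not how any particular IAM product models permissions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AccessGrant:
    principal: str                    # who receives access: a person or an Agent
    resource: str
    scope: str                        # e.g. "read-only"
    approved_by: Optional[str]        # the human who signed off
    accountable_owner: Optional[str]  # the human who answers if it breaks


def is_effective(grant: AccessGrant) -> bool:
    """A grant counts only if someone signed it and someone owns the outcome."""
    return bool(grant.approved_by) and bool(grant.accountable_owner)


# Hypothetical examples: the engineer's grant has both names filled in,
# the Agent's grant has neither.
engineer = AccessGrant("engineer-a", "production", "read-only",
                       approved_by="manager-b", accountable_owner="engineer-a")
agent = AccessGrant("repair-agent", "production", "read-only",
                    approved_by=None, accountable_owner=None)

print(is_effective(engineer), is_effective(agent))
```

The code is trivial. Filling in the two empty fields for the Agent is what took three meetings and still didn't happen.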

The deeper the org hierarchy, the higher the cost for AI to traverse context. In a 30-person startup, AI getting any data is one config line. In a 3000-person business line, AI getting the same data crosses three departments, five tables, eight approval nodes.

This is organizational gravity, not a technical problem.

In our dev environment, the "log to code" loop is fully wired. The AI reads an error log, links to the relevant code, drafts a fix PR. End-to-end, it works beautifully on test data. We still don't trust it in production. The data pipeline has dead spots. The permission model isn't ready to grant production read access. The accountability question isn't settled.

This wall has held us up for about two quarters. I now understand why so many large-company AI projects end as "internal demos." The bottleneck isn't technology. It's that context can't traverse the org.

Layer 3: Where It Gets Surreal

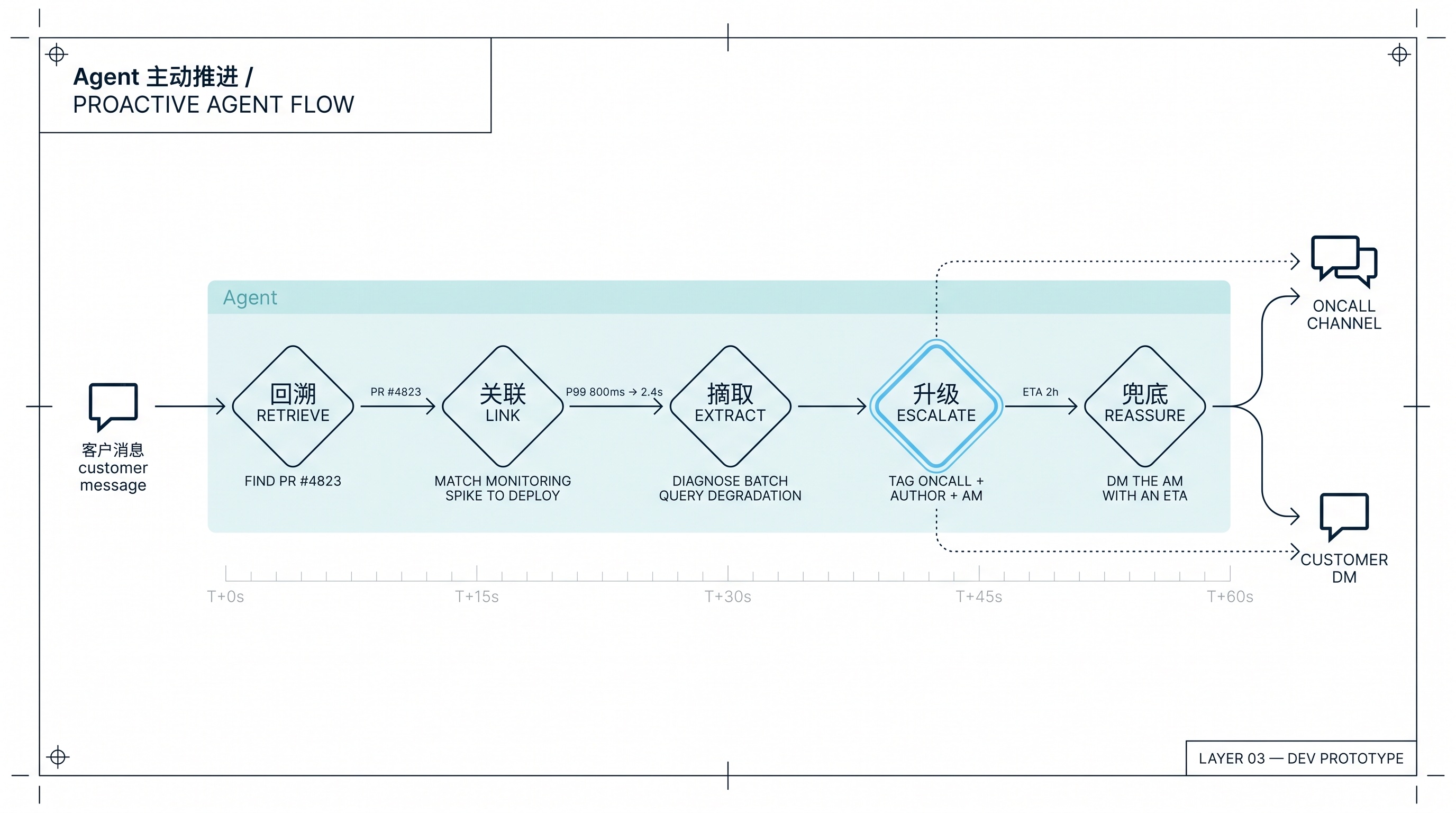

Agent Proactivity: From Customer Message to Self-Escalation

If you can clear the context wall, Layer 3 starts becoming real.

This layer still feels unreal to me, even now. Building it isn't actually that hard once the plumbing is in place. What surprises me is the picture you see once it's running.

Here's what I want to see, and what we're starting to see in dev:

One morning, an account manager forwards a customer complaint into Lark: "Your XX feature has been slow this week. Our backend batch job keeps timing out."

In the past, this message would start a long relay race. Account manager tags PM. PM tags engineering lead. Engineering lead asks the group, "anyone know about this?" Someone remembers a code change last week, tags the engineer. The engineer pulls up monitoring, digs through release notes, finds the PR, finds the logs. Two or three hours later. Customer still waiting.

At Layer 3 it goes like this: the Agent reads that message (it's in the group, with proper context permissions) and autonomously does the following:

It retrieves: this feature had a release last week. PR #4823.

It links: the P99 for this feature jumped from 800ms to 2.4s. The spike timing matches the deploy.

It extracts: the diff in PR #4823 contains a batch query pattern that probably degrades under high data volume.

It escalates: in the oncall channel, it tags the original author Zhang San, the on-duty oncall Li Si, and the account manager. "I've located this customer issue. Likely related to PR #4823, batch query performance. Recommend escalating to P2. Draft fix is in the PR comments. Please review."

It reassures, in the account manager's DM: "Issue located and escalated. Estimated 2-hour turnaround on a fix proposal. Stand by."

This entire sequence happens while people are still sipping morning coffee.
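The "links" step in that sequence is the easiest one to sketch: compare P99 latency just before and just after a deploy. This is a minimal illustration under assumptions. The one-hour window, the 2x ratio, the timestamps, and the function name are all hypothetical choices of mine; only the 800ms and 2.4s figures come from the scenario above.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import List, Tuple


def spike_follows_deploy(
    samples: List[Tuple[datetime, float]],
    deploy_time: datetime,
    window: timedelta = timedelta(hours=1),
    ratio: float = 2.0,
) -> bool:
    """True if median P99 after the deploy is at least `ratio` times the median before it."""
    before = [ms for ts, ms in samples if deploy_time - window <= ts < deploy_time]
    after = [ms for ts, ms in samples if deploy_time <= ts < deploy_time + window]
    if not before or not after:
        return False  # not enough data to claim a match
    return median(after) >= ratio * median(before)


# Hypothetical deploy time and P99 samples in milliseconds.
deploy_time = datetime(2024, 1, 1, 10, 0)
samples = [
    (datetime(2024, 1, 1, 9, 15), 800.0),
    (datetime(2024, 1, 1, 9, 45), 820.0),
    (datetime(2024, 1, 1, 10, 15), 2400.0),
    (datetime(2024, 1, 1, 10, 45), 2350.0),
]

print(spike_follows_deploy(samples, deploy_time))
```

A timing match like this is only a hint, which is why the Agent in the scenario says "likely related" and asks for review instead of acting on it.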

The fundamental difference between Layer 3 and Layer 2? AI moved from reactive to proactive. Layer 2's AI is an "ask and you shall receive" customer service rep. Layer 3's AI grew professional instinct: it identifies risk on its own, pulls in the right people, finds the code, drafts solutions, reports progress upward.

The key inflection point: what really changes is that AI shows professional judgment for the first time, not that AI can write code. That instinct used to take two or three years to develop in a junior engineer. What counts as urgent, who to tag first, when to escalate, when to handle alone. AI has it now.

My one-word evaluation: surreal.

What's surreal? Through Layer 2, every AI action followed a "you click, it moves" pattern. From Layer 3 onward, it acts on its own. When you sit down at your inbox in the morning, you find that half the items already have the prep work done. You're just there to say yes or no, to provide the final judgment.

The boundaries remain. It can draft, but it can't decide. It can escalate, but it can't prioritize. It can tag people, but the person tagged still has to read the context, make the call, and write the code.

And writing code, at Layer 3, still doesn't go back to AI. Not because AI can't write code; it has been able to do that for a while. The hard part is knowing what to fix, where to pull the context from, and how to verify the fix is correct. Code is the easy part. Knowing "what to fix, why, and how to know it's working" is the actual substance of engineering. That still takes a human.

Layer 3 grew AI eyes and a mouth. Not yet hands.

Layer 4: Full-Loop Autonomy (imagined, not yet seen)

About Layer 4 I owe you honesty: we're on the road, and the road is long.

Here's the picture: a production log surfaces an anomaly → Agent catches it → reproduces it → locates the code → writes the fix → writes tests → runs CI → opens the PR → rolls through canary → reads AB data → decides on full rollout → monitors regression → closes the ticket.

End-to-end observable. End-to-end traceable. End-to-end reversible. If anything goes wrong you can see exactly what decision the Agent made at every step, and you can pull any step back to redo it.
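Here is roughly what "observable, traceable, reversible" could mean as a data structure: every step records the decision it made and how to undo it. This is a sketch, not our implementation. The `AgentRun` and `Step` classes, the step names, and the in-memory `state` dictionary are hypothetical stand-ins for real pipeline state.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    decision: str               # what the Agent decided, in plain words
    undo: Callable[[], None]    # how to reverse this step


@dataclass
class AgentRun:
    steps: List[Step] = field(default_factory=list)

    def record(self, name: str, decision: str, undo: Callable[[], None]) -> None:
        self.steps.append(Step(name, decision, undo))

    def trace(self) -> List[str]:
        """Readable log of every decision still in effect."""
        return [f"{s.name}: {s.decision}" for s in self.steps]

    def rollback_to(self, name: str) -> None:
        """Undo steps newest-first, up to and including the named step."""
        if name not in [s.name for s in self.steps]:
            raise ValueError(f"unknown step: {name}")
        while self.steps:
            step = self.steps.pop()
            step.undo()
            if step.name == name:
                break


# Hypothetical pipeline state, kept in a dict for illustration.
state = {"pr_open": False, "canary": False}
run = AgentRun()

state["pr_open"] = True
run.record("open_pr", "fix drafted, tests pass", lambda: state.update(pr_open=False))
state["canary"] = True
run.record("canary", "error rate flat", lambda: state.update(canary=False))

run.rollback_to("canary")
print(state, run.trace())
```

Pulling back the canary leaves the PR open and its decision still in the trace. The hard cases are the steps whose `undo` cannot be written at all, such as a message already sent to a customer.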

The fundamental difference between Layer 4 and Layer 3? AI finally grew "hands." Layer 3 still passes the ball back to humans. It locates, drafts, escalates, then waits. Layer 4 catches the ball and runs the whole track. Not just "identifying the problem." Actually "solving" it.

Going from dev to production hits a stack of unsolved problems.

First: rollback. What happens when the Agent ships the wrong fix? A bad release can mean millions in customer losses, a regulatory compliance incident, reputational risk. In the human world this runs on SRE intuition, on-call instincts, the "let me roll this back fast" reflex. In the Agent world, you have to write all of that down as an explicit protocol. What metric change triggers an auto-rollback? How fast counts as "fast"? How do you reconcile state after the rollback? What if you can't?

Second: accountability. The Agent shipped a bug, the customer took losses, who's responsible? The engineer who approved the PR? The team that deployed the Agent? The company? In any regulated environment this question is lethal, because regulators trace back to individuals. Regulators don't accept "the AI did it."

We had an internal meeting about this. Three hours. No conclusion. There was no technical obstacle. There was nobody in the org willing to put their name on the line.

Third: observation cost. The more autonomous the Agent gets, the less people see what it's doing. By the time you notice it's doing something stupid, it might already have done it ten thousand times. What does this mean in practice? One day you might receive a phone call from compliance telling you the Agent has been quietly committing a small infraction for three months, only just discovered today.
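Going back to the first of those three problems, "write it down as an explicit protocol" can start as small as the rule below. It is a sketch under assumptions: the 2x threshold, the three-consecutive-readings rule, and the function name are hypothetical choices for illustration, not values we have settled on.

```python
from typing import Sequence


def should_roll_back(
    error_rates: Sequence[float],
    baseline: float,
    max_ratio: float = 2.0,
    consecutive: int = 3,
) -> bool:
    """True once `consecutive` successive readings exceed max_ratio * baseline."""
    streak = 0
    for rate in error_rates:
        if rate > max_ratio * baseline:
            streak += 1
            if streak >= consecutive:
                return True
        else:
            streak = 0  # a healthy reading resets the count
    return False


# Hypothetical error-rate readings taken after a release.
print(should_roll_back([0.01, 0.05, 0.05, 0.05], baseline=0.01))
print(should_roll_back([0.05, 0.01, 0.05, 0.05], baseline=0.01))
```

Every default in that signature answers one of the questions above, and someone has to sign off on each number. It says nothing about reconciling state after the rollback, which is the part we still can't write down.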

I've previously described this as a "log-driven autonomous repair loop." That's basically Layer 4: let real production data drive the Agent's workflow directly. Humans only show up at critical decision points. But where exactly are the critical decision points, how to define them, who defines them, and who carries the accountability when things go wrong? Those are still open questions for us.

Layer 5: The Final Interrogation

Layer 5 isn't really a new capability. It's a set of questions.

The core question: is your entire enterprise architecture friendly to AI?

The fundamental difference between Layer 5 and the previous four? Layers 1 through 4 ask "how do we use AI to do work." Layer 5 asks whether your company has earned the right to use AI at all.

Sounds dramatic. It's actually very concrete. Broken down:

When a production bug occurs, can the AI see the full event? Logs, traces, user behavior, context: are these instrumented in a place it can read? Or are they scattered across seven systems that even humans can't reconcile? (This is exactly the wall we're stuck at between Layers 2 and 3.)

When a requirement comes in, can the AI know its origin and context? Is this a real customer scenario, a sales promise made to close a deal, or a PM's stray idea? The layers go further: is it a compliance requirement? A regulatory mandate? A casual remark from a senior exec? Has that context been written down somewhere an Agent can find it? Or does it live only in some director's WeChat history?

Can the right issues escalate? Does your Agent have the cross-team, cross-permission, cross-tool passport it needs, or does it hit a wall every time it crosses a boundary? In a pyramid-shaped org, this is brutal: a permission grant takes five approvals, the Agent waits three days for a single hop.

Is your code documentation written for AI? Notice the framing: for AI, not for the next human. Standards differ. Documentation for humans tolerates ambiguity, tribal knowledge, "you know what I mean." Documentation for AI must be explicit, self-contained, and fully contextualized. Most legacy system documentation is illegible to an AI.

Layer 5 is an organizational problem. Technology has very little to do with it.

It means every process, document, permission, and tool in your company has to be re-examined: is this AI-friendly? If not, every capability you built in Layers 1 through 4 will get cut off at the knees here.

I've watched too many AI projects end as "internal demos" or "pilots that quietly faded." They put serious effort into building Agent capabilities, only to find the Agent can't actually run. Log structures inherited from a decade ago. Requirement docs scattered across three different collaboration tools. Key judgment that exists only in one person's head. Cross-department permissions that take three weeks to provision.

The Agent stands there. It can do everything. It can do nothing.

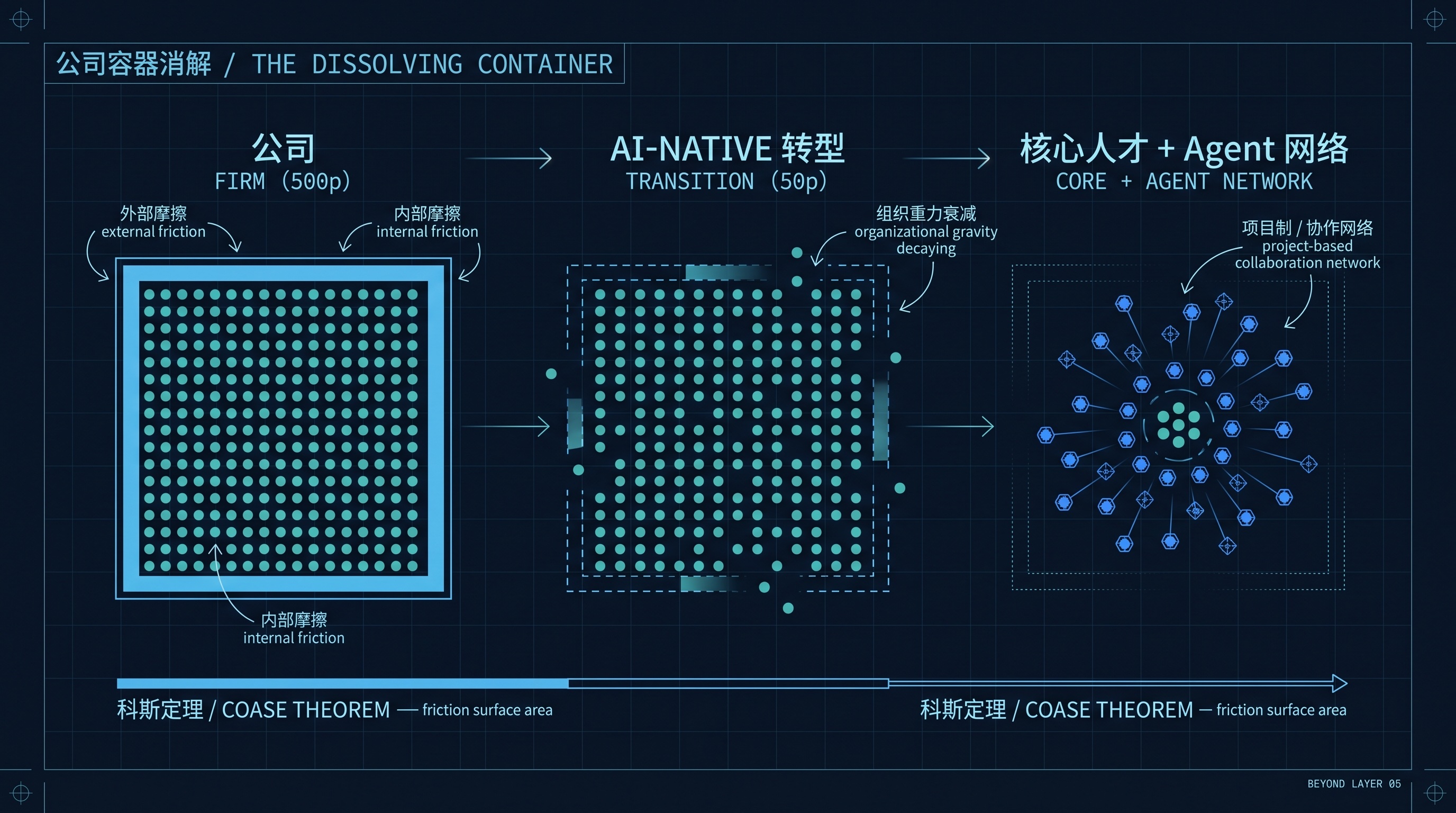

Beyond Layer 5: Do We Still Need a Company?

The Dissolving Container: From Pyramid to Collaboration Network

Layer 5's interrogation isn't actually finished here. If you really do remake your organization into an "AI-friendly" form, something deeper than "efficiency gains" happens: the headcount structure of the company gets fundamentally rewritten.

Let me put a stake in the ground: functional specialization itself may need to be rewritten from the ground up.

The corporate architecture we run today is, at its core, inherited from the assembly line. Break a big task into many small pieces, hand each piece to a specialist. The assembler only assembles. QA only QAs. Procurement only procures. Legal only reads contracts. Compliance only watches compliance. Product only draws product. Engineering only writes code.

Why this division? Because human bandwidth is finite. The amount of context one person can hold in their head is bounded, and so is their attention. Ask a single person to do assembly, QA, and procurement simultaneously and they'll fail at all three. So narrower and more vertical means more efficient. This has been the first principle of organizational design since the Industrial Revolution.

AI doesn't have this constraint.

AI can run ten parallel things you've handed to it without worrying about attention. Its "bandwidth" depends almost entirely on whether the context you give it is rich enough and the rules you've defined are clear enough. The implication: once a person has AI in hand, the territory they can effectively cover expands from one narrow line to a wide area.

The old game asked for depth. The new game asks for breadth.

The old archetype was an expert who drilled all the way to the bottom of one domain. The new archetype is a "deep in one, strong across many" composite professional: someone who deeply understands their own primary field, can hold their own across several adjacent fields, and is fluent enough with AI to run all of those fields in parallel.

The implications for org design are radical.

HR, admin, procurement: these are all transactional process departments at their core. In an AI-Native company, these three could collapse into a 5-person "operations platform" team, with everything else running on AI.

Legal and risk control: both fundamentally do compliance judgment and risk identification. These departments often have hundreds of people each, but the underlying logic is the same. Merging them into one team after AI-Native arrives makes complete sense.

Product and engineering is the most dramatic case. Today my department has PMs, engineers, QA, designers, and ops as five separate lines, with five leads and five reporting relationships. Once you have Layer 3 and Layer 4 AI actually running, the massive "alignment cost" disappears. The PM's mental model gets translated by AI into a code draft. The engineer's diff gets covered by AI-generated tests. The bug found by QA gets traced back by AI to its requirement origin. Five lines collapse into a single "product-engineering integrated" small team. Entirely doable.

The most striking part: most companies don't actually need that many "scientist-grade" specialists. Deep expertise still has value, but the demand for it shrinks dramatically. A mid-sized business line that used to need thirty deep specialists might in the future need five truly top-tier experts plus a roster of "breadth + AI leverage" composite professionals.

I'll be honest: this won't happen overnight. Organizational gravity is too heavy. Process inertia runs deep. But the direction is clear. Within five years, you'll see org charts get flatter, functional boundaries blur, and "uses AI as leverage to amplify output" become an implicit baseline in every job description.

This shift hasn't reached its endpoint. Push one more step and a sharper question shows up.

Is the Company Itself Still Necessary?

Economics has a classical explanation in Ronald Coase's theory of the firm: firms exist to reduce the transaction friction that arises when groups of people try to coordinate on the open market.

The argument: if you want to build a car, in theory you could find 100 independent workers in the market and have each one do a piece by contract. In practice you don't, because finding 100 people, signing 100 contracts, coordinating 100 schedules, and resolving 100 disputes adds up to absurd transaction costs. So you form a company, hire those 100 people, and replace market transactions with internal management. The friction gets "internalized."

A company, fundamentally, is a container for friction reduction.

But what happens if AI-Native genuinely shrinks organizations down? What if a 500-person business line becomes a 50-person composite team plus an army of Agents?

The friction surface area of 500 people is enormous: hiring, training, performance reviews, promotions, office politics, cross-team coordination, compliance audits, culture work. Those "internal frictions" are themselves a massive cost. The reason a company is worth existing is that the "external frictions" it eliminates exceed the "internal frictions" it generates.

Cutting to 50 people shrinks the friction surface dramatically. What about a 10-person or even a single-person "company"?

When a group becomes a handful, the friction between that handful and society shrinks from a large fire to a few sparks. At that point, the value of "company" as a friction-reduction container decays rapidly.

So what's the ultimate form of AI-Native?

Possibly something we can't fully see yet: a small core of human talent, plus a dispatchable swarm of AI Agents, interfacing directly with the market through "project-based" or "collaboration network" arrangements. No pyramid. No departments. None of the middle layer that exists purely to coordinate hundreds of people.

Sounds like science fiction. But trace back through every layer of the model: AI writes your code, AI enters the workflow, AI recognizes risk, AI closes the loop, the organization becomes AI-friendly. Each layer strips away something that existed only to "coordinate a group of people." Strip past Layer 5 and keep going, and what you're stripping is the company itself.

I don't know whether our generation will actually live to see that day. But I can already feel the direction of the gradient.

As I push AI-Native through an organization of several thousand people, every step forward triggers the same voice in my head: are you helping the company become more efficient, or are you making the company less necessary?

This question doesn't have an answer. I'm not going to manufacture one. But everyone walking the AI-Native road will, sooner or later, have to face it.

Notes in the Margin

Looking back across the five layers and the unnumbered question after them, what's actually changing isn't the technology. It's the shape of the question.

Layer 1 asks: can AI write code? Layer 2: can AI enter the workflow? Layer 3: can AI recognize risk? Layer 4: can AI close the loop? Layer 5: can the organization let AI close the loop? After Layer 5: once the organization actually changes, does the company itself still need to exist?

The first four are problems a single function can drive. The fifth isn't. Layer 5 requires the CEO, CTO, HR, legal, compliance, and finance to remake the company together: rewriting process documentation, redrawing permissions, redefining KPIs, redefining roles. Most of this gets deferred. It's hard, the short-term ROI is invisible, and every change touches departmental fiefdoms.

The question after Layer 5 sits beyond any single role's reach. It's beyond what a single CEO can decide. It's a question this company, this industry, and this generation have to face together.

But you can't actually defer it. The AI-Native window won't stay open forever. When your competitor is operating stably at Layers 3 and 4 while you're still hiring people to fill in meeting notes at Layer 2, the gap between you isn't technical. It's a generational gap in how the organization is built. Push the timeline further: when your competitor has started redrawing functions, merging departments, and flattening their org chart, while you're still defending a traditional pyramid, the gap stops being a generational gap. It becomes a species-level gap.

I'm under no illusion that drawing the map is the same as walking it. My team is the proof. We tasted the wins at Layer 2 and got stuck at the "context" wall for two quarters. We barely have a Layer 3 prototype running in dev and can only imagine Layer 4 in our heads. Every Layer 5 question is biting us right now, and we haven't even started touching the question past Layer 5.

If this map can leave anything to those walking the same stretch of road, it's one line: don't wait for "AI to mature." The people who wait won't win. The people who walk and edit the map at the same time will. This matters more in heavyweight environments with deep hierarchy and slow processes, because every step there is three times slower than at a startup. The earlier you move, the earlier you start clearing the decade-old debt that's been deferred, and the better your chance of standing at Layer 3 or Layer 4 when the window starts closing.

Those 40 minutes every morning batch-processing the inbox might look like a side effect of a new way of working. It's actually a new organizational form leaning over me, asking for a decision.

Every time I shut my laptop and head into a meeting, I find myself asking: will the time I spend managing those machine subordinates one day exceed the time I spend managing humans? If it will, this company's shape is going to need redrawing.

And one layer deeper: that redrawn shape may not be a "company" at all anymore.